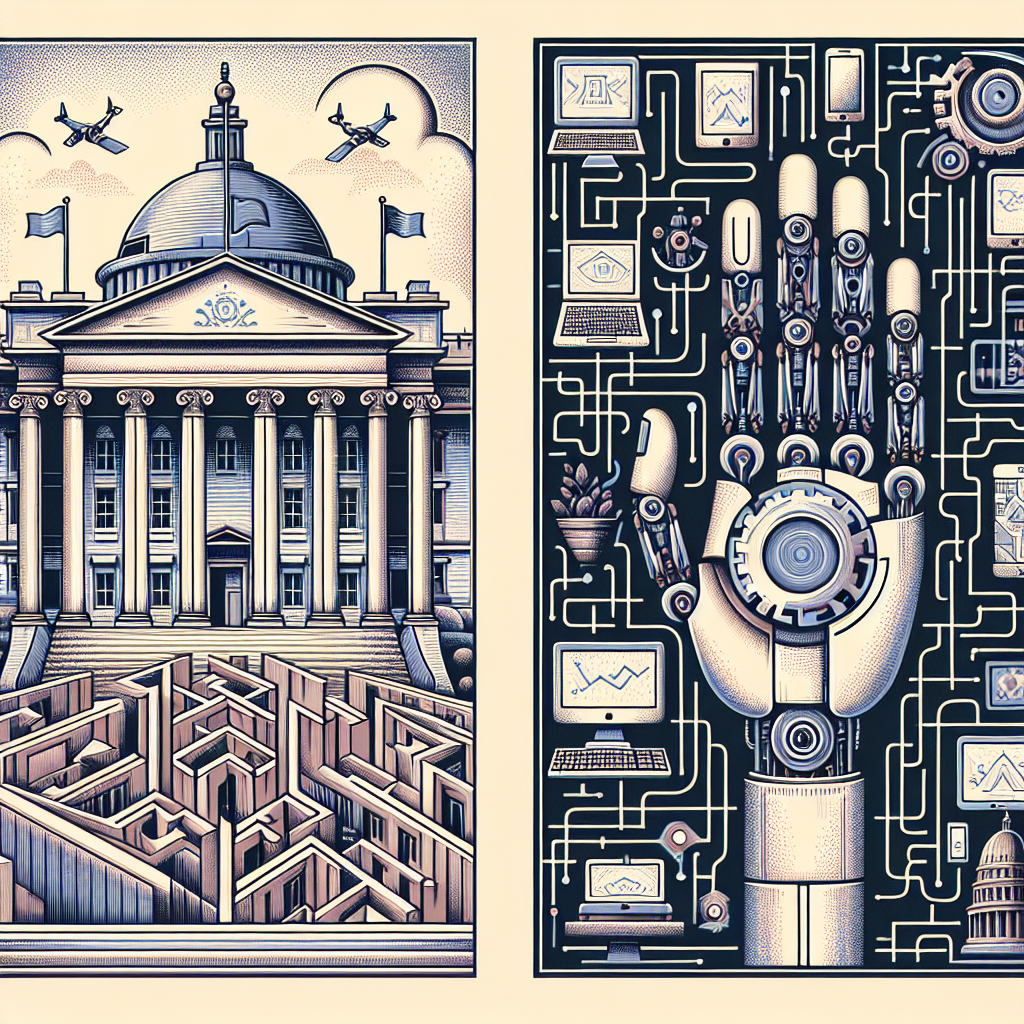

Artificial Intelligence (AI) has the potential to revolutionize the way government agencies operate, improving efficiency, reducing costs, and enhancing the quality of services provided to citizens. However, implementing AI in government agencies comes with a unique set of challenges that must be addressed to ensure successful integration and deployment.

One of the main challenges of implementing AI in government agencies is the lack of expertise and resources. Many government agencies do not have the necessary technical expertise to develop and implement AI solutions, nor do they have the budget to hire outside experts. This can make it difficult for agencies to effectively leverage AI technology to improve their operations.

Another challenge is the complexity of government processes and regulations. Agencies operate within a dense web of rules and procedures, which can make it difficult to integrate AI systems into existing workflows. They must also ensure that AI systems comply with data privacy and security regulations, which can require significant legal and technical effort.

A third challenge is the potential for bias in AI systems. An AI model is only as good as the data it is trained on: if the training data reflects historical bias, the model is likely to reproduce it. This has serious implications for government agencies, especially in areas such as law enforcement and healthcare, where a biased system could lead to unfair outcomes for certain groups of people.

In addition, there are concerns about the impact of AI on jobs in government agencies. Many fear that AI systems will automate tasks currently performed by human workers, leading to job losses and displacement. Government agencies must carefully consider the impact of AI on their workforce and develop strategies to retrain and redeploy workers whose jobs are at risk of being automated.

Despite these challenges, there are also many opportunities for government agencies to benefit from AI technology. For example, AI can help government agencies automate routine tasks, freeing up human workers to focus on more complex and high-value activities. AI can also help government agencies analyze large amounts of data more efficiently, enabling them to make better-informed decisions and improve the quality of services they provide to citizens.

To address the challenges of implementing AI in government agencies, it is important for agencies to develop a clear strategy for integrating AI technology into their operations. This strategy should include a plan for acquiring the necessary expertise and resources, as well as a framework for ensuring that AI systems comply with regulations and ethical standards. Agencies should also prioritize transparency and accountability in their use of AI, so that decisions made or supported by AI systems can be explained, reviewed, and corrected if they prove unfair or biased.

In conclusion, implementing AI in government agencies presents a unique set of challenges, including the lack of expertise and resources, the complexity of government processes and regulations, the potential for bias in AI systems, and concerns about the impact on jobs. However, with careful planning and strategic implementation, government agencies can overcome these challenges and harness the power of AI to improve their operations and better serve the public.

FAQs:

Q: What are some examples of AI applications in government agencies?

A: Some examples of AI applications in government agencies include predictive analytics for law enforcement, chatbots for citizen services, and natural language processing for document classification and search.

Q: How can government agencies address concerns about bias in AI systems?

A: Government agencies can address concerns about bias in AI systems by ensuring that training data is representative and diverse, regularly auditing AI systems for bias, and implementing transparency and accountability measures in the use of AI technology.
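As an illustration of what "regularly auditing AI systems for bias" can look like in practice, the sketch below compares how often each group receives a favorable decision and flags any group whose rate falls below 80% of the best-treated group's rate. This mirrors the "four-fifths" rule of thumb from US employment-selection guidance; it is only one simple metric, not a complete fairness audit. The function names, the group labels, and the 0.8 threshold are illustrative assumptions, not part of any specific agency's process.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Return the share of favorable decisions for each group.

    outcomes: iterable of (group, favorable) pairs, where favorable is a bool.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, is_favorable in outcomes:
        totals[group] += 1
        if is_favorable:
            favorable[group] += 1
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratios(outcomes):
    """Ratio of each group's selection rate to the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())  # raises ValueError on empty input
    if best == 0:
        # No group received a favorable decision, so there is no relative gap.
        return {g: 1.0 for g in rates}
    return {g: rate / best for g, rate in rates.items()}


def flag_groups(outcomes, threshold=0.8):
    """Groups whose ratio falls below the (assumed) four-fifths threshold."""
    ratios = disparate_impact_ratios(outcomes)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Hypothetical audit log: (group label, was the decision favorable?)
    decisions = [
        ("A", True), ("A", True), ("A", False), ("A", True),
        ("B", True), ("B", False), ("B", False), ("B", False),
    ]
    print(flag_groups(decisions))  # ['B']
```

A flagged group is a prompt for human review of the data and the model, not proof of unfairness on its own; small sample sizes in particular can produce misleading ratios.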

Q: How can government agencies ensure that AI systems comply with data privacy and security regulations?

A: Government agencies can ensure that AI systems comply with data privacy and security regulations by implementing robust data governance policies, conducting regular security audits, and obtaining necessary certifications and accreditations for their AI systems.

Q: What are some strategies for retraining and redeploying workers whose jobs are at risk of being automated by AI?

A: Strategies for retraining and redeploying workers whose jobs are at risk of automation include providing training programs for new skills, offering career counseling and job placement services, and creating opportunities for workers to transition to new roles within the organization.